To edit the robots.txt file, open the Marketing > SEO > Robots.txt menu in your manager. Uncheck the box titled "use automatic robots.txt file" so that you can edit the file.

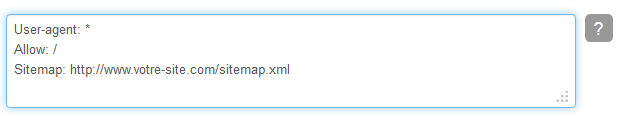

As we explained earlier, the file contains three lines, the first two of which indicate that all search engines are allowed to index your site. This file, which is dedicated to improving your site's SEO, is created for you automatically as soon as you create your site, and it looks like the following image.

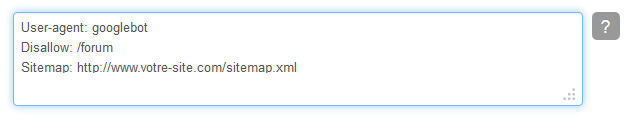

If you want to tell search engines specifically what to index, or conversely what not to index, use the directives of the robots exclusion protocol to edit this file. For example, if you do not want Google to index your site's forum, add a `Disallow` rule for the forum's path under a `User-agent: Googlebot` line in the robots.txt field.
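
As a minimal sketch of such a rule, assuming the forum lives under the path `/forum/` (a hypothetical path used here for illustration; check the actual URL of your forum), the added lines could look like this:

```
# Applies only to Google's crawler
User-agent: Googlebot
# Hypothetical path: replace /forum/ with the actual path of your forum
Disallow: /forum/
```

This group only addresses Googlebot, so other search engines are not affected by it. To address every crawler at once, `User-agent: *` is the usual wildcard.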

For more information about writing rules for search engine robots, we recommend reading this guide on Wikipedia.